Edit: Results tabulated, thanks for all y’alls input!

Results fitting within the listed categories

Just do it live

- Backup while it is expected to be idle @[email protected] @[email protected] @[email protected]

- @[email protected] suggested adding a real long-ass-backup-script to run monthly to limit overall downtime

Shut down all database containers

- Shutdown all containers -> backup @[email protected]

- Leveraging NixOS impermanence, reboot once a day and backup @[email protected]

Long-ass backup script

- Long-ass backup script leveraging a backup method in series @[email protected] @[email protected]

Mythical database live snapshot command

(it seems pg_dumpall for Postgres and mysqldump for mysql (though some images with mysql don’t have that command for meeeeee))

- Dump Postgres via `pg_dumpall` on a schedule, backup normally on another schedule @[email protected]

- Dump mysql via mysqldump and pipe to restic directly @[email protected]

- Dump Postgres via `pg_dumpall` -> backup -> delete dump @[email protected] @[email protected]

Docker image that includes Mythical database live snapshot command (Postgres only)

- Make your own docker image (https://gitlab.com/trubeck/postgres-backup) and set to run on a schedule, includes restic so it backs itself up @[email protected] (thanks for uploading your scripts!!)

- Add docker image `prodrigestivill/postgres-backup-local` and set to run on a schedule, backup those dumps on another schedule @[email protected] @[email protected] (also recommended additionally backing up the running database and trying that first during a restore)

New categories

Snapshot it, seems to act like a power outage to the database

- LVM snapshot -> backup that @[email protected]

- ZFS snapshot -> backup that @[email protected] (real world recovery experience shows that databases act like they’re recovering from a power outage and it works)

- (I assume btrfs snapshot will also work)

One liner self-contained command for crontab

- One-liner crontab that prunes to maintain 7 backups, dump Postgres via `pg_dumpall`, zips, then rclone them @[email protected]
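For anyone wanting a starting point for that crontab approach, here is a rough sketch of the same steps as a small shell function. It is not the commenter's actual one-liner. The container name, dump folder, and rclone remote are hypothetical, gzip stands in for "zips", and it assumes the database superuser is `postgres`.

```shell
# Hypothetical names: adjust container, folder, and rclone remote to your setup.
DB_CONTAINER="${DB_CONTAINER:-postgres}"
DUMP_DIR="${DUMP_DIR:-/srv/db-dumps}"
RCLONE_DEST="${RCLONE_DEST:-remote:db-dumps}"

dump_zip_sync() {
    local out
    out="$DUMP_DIR/pg-$(date +%F-%H%M%S).sql"
    mkdir -p "$DUMP_DIR"

    # Dump all databases from the running container, then compress the result.
    docker exec "$DB_CONTAINER" pg_dumpall -U postgres > "$out" || return 1
    gzip "$out" || return 1

    # Keep the 7 newest dumps: list newest first, delete everything from the 8th on.
    ls -1t "$DUMP_DIR"/pg-*.sql.gz | tail -n +8 | xargs -r rm --

    # Mirror the folder to the remote; sync also removes the pruned files there.
    rclone sync "$DUMP_DIR" "$RCLONE_DEST"
}
```

Saved as a script that ends with a call to `dump_zip_sync`, it could be scheduled with a crontab entry such as `0 3 * * * /usr/local/bin/db-dump.sh` (path is made up). Keeping it in a script also avoids having to escape every `%` in the `date` format, which crontab would otherwise treat specially.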

Turns out Borgmatic has database hooks

- Borgmatic with its explicit support for databases via hooks (autorestic has hooks but it looks like you have to make database controls yourself) @[email protected]

I’ve searched for this long and hard and I haven’t really seen a good consensus that made sense. The SEO is really slowing me down on this one, stuff like “restic backup database” gets me garbage.

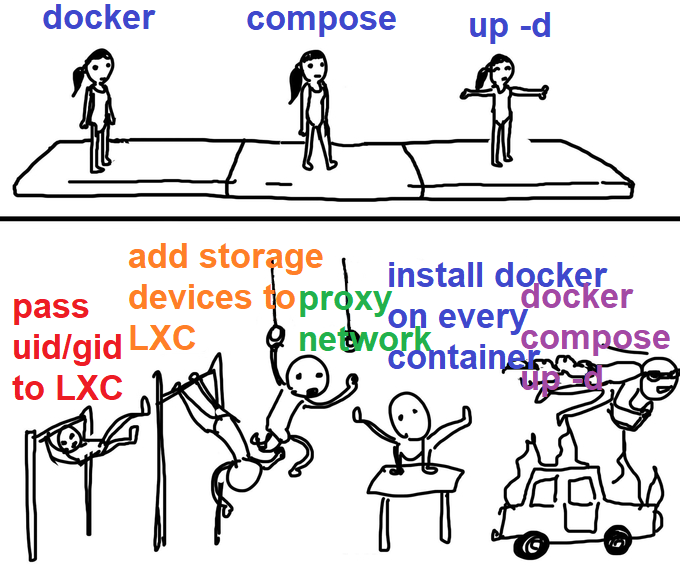

I’ve got databases in docker containers in LXC containers, but that shouldn’t matter (I think).

me-me about containers in containers

I’ve seen:

- Just backup the databases like everything else, they’re “transactional” so it’s cool

- Some extra docker image to load in with everything else that shuts down the databases in docker so they can be backed up

- Shut down all database containers while the backup happens

- A long ass backup script that shuts down containers, backs them up, and then moves to the next in the script

- Some mythical mentions of “database should have a command to do a live snapshot, git gud”

None seem turnkey except for the first, but since so many other options exist I have a feeling the first option isn’t something you can rest easy with.

I’d like to minimize backup down times obviously, like what if the backup for whatever reason takes a long time? I’d denial of service myself trying to backup my service.

I’d also like to avoid a “long ass backup script” cause autorestic/borgmatic seem so nice to use. I could, but I’d be sad.

So, what do y’all do to backup docker databases with backup programs like Borg/Restic?

`pg_dumpall` on a schedule, then restic to backup the dumps. I’m running Zalando Postgres in kubernetes so scheduled tasks and intercontainer networking is a bit simpler, but should be able to run a sidecar container in your compose file.

So you’re saying you dump on a sched to a <place> and then just let your restic backup pick it up asynchronously?

My backup service runs pg_dumpall, then borg create, then deletes the dump.
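A minimal sketch of that dump -> `borg create` -> delete sequence, assuming Postgres runs in a container and the superuser is `postgres`. The container name, dump folder, and repository path are placeholders, not anything from the commenter's setup.

```shell
# Hypothetical names: adjust the container, dump folder, and repo to your setup.
DB_CONTAINER="${DB_CONTAINER:-postgres}"
DUMP_DIR="${DUMP_DIR:-/srv/db-dumps}"
BORG_REPO="${BORG_REPO:-/mnt/backup/borg}"

dump_backup_cleanup() {
    local dump="$DUMP_DIR/pg_dumpall.sql"
    mkdir -p "$DUMP_DIR"

    # 1. Dump every database in the running container to plain SQL.
    docker exec "$DB_CONTAINER" pg_dumpall -U postgres > "$dump" || return 1

    # 2. Archive the dump; borg expands {now} into a timestamp.
    borg create --compression zstd "$BORG_REPO::db-{now}" "$dump" || return 1

    # 3. Only reached if both steps above succeeded: remove the dump.
    rm -f "$dump"
}
```

If either the dump or the archive step fails, the function returns early and leaves the dump file in place for inspection.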

Pretty much - I try and time it so the dumps happen ~an hour before restic runs, but it’s not super critical

+1 for long-ass backup script. First dump the databases with the appropriate command. Currently, I have only MariaDB and Postgres instances. Then, I use Borg to backup the database dumps and the docker volumes.

Database SQL dumps compress very well. I haven’t had any problems yet

It’s gon b long ass backup script I think!

I tried to find this on DDG but also had trouble so I dug it out of my docker compose

Use this docker container:

prodrigestivill/postgres-backup-local

(I have one of these for every docker compose stack/app)

It connects to your postgres and uses the pg_dump command on a schedule that you set with retention (choose how many to save)

The output then goes to whatever folder you want.

So I have a main folder called docker data, and this folder is backed up by borgmatic

Inside I have a folder per app, like authentik

In that I have folders like data, database, db-bak etc

Postgres data would be in the database folder and the output of the above dump would be in the db-bak folder.

So if I need to recover something, the first step is to just copy the whole app folder and see if that works. If not, I can grab a database dump and restore it into the database and see if that works. If not, I can pull a db dump from any of my previous backups until I find one that works.

I don’t shutdown or stop the app container to backup the database.

In addition to hourly Borg backups for 24 hrs, I have zfs snapshots every 5 mins for an hour and the pg_dump happens every hour as well. For a homelab this is probably more than sufficient

Thorough, thanks! I see you and some others are using “asynchronous” backups where the databases backup on a schedule and the backup program does its thing on its own time. That might actually be the best way!

I setup borg around 4 months ago using option 1. I’ve messed around with it a bit, restoring a few backups, and haven’t run into any issues with corrupt/broken databases.

I just used the example script provided by borg, but modified it to include my docker data, and write info to a log file instead of the console.

Daily at midnight, a new backup of around 427 GB of data is taken. At the moment that takes 2-15 min to complete, depending on how much data has changed since yesterday, though the initial backup was closer to 45 min. Then old backups are trimmed: backups less than 24 hours old are kept, along with 7 dailies, 3 weeklies, and 6 monthlies. Anything outside that scope gets deleted.
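That retention policy maps fairly directly onto `borg prune` flags. A sketch, with a placeholder repository path:

```shell
# Hypothetical repo path; point this at your own repository.
BORG_REPO="${BORG_REPO:-/mnt/backup/borg}"

prune_old_backups() {
    # Keep everything from the last 24 hours, plus 7 daily,
    # 3 weekly, and 6 monthly archives; delete the rest.
    borg prune --list \
        --keep-within 1d \
        --keep-daily 7 \
        --keep-weekly 3 \
        --keep-monthly 6 \
        "$BORG_REPO"
}
```

On borg 1.2 and later, the disk space is only reclaimed once `borg compact` has also been run against the repository.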

With the compression and de-duplication borg does, the 15 backups I have so far (5.75 TB of data) currently take up 255.74 GB of space. 10/10 would recommend on that aspect alone.

/edit, one note: I’m not backing up Docker volumes directly, though you could just fine. Anything I want backed up lives in a regular folder that’s then bind mounted to a docker container (including things like paperless-ngx’s databases).

Love the detail, thanks!!

I have one more thought for you:

If downtime is your concern, you could always use a mixed approach. Run a daily backup system like I described, somewhat haphazard with everything still running. Then once a month at 4am or whatever, perform a more comprehensive backup, looping through each docker project and shutting them down before running the backup and bringing it all online again.
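The monthly "shut it down, back it up, bring it back" pass could look roughly like this. It assumes one sub-folder per compose project, each holding a `docker-compose.yml` and its bind-mounted data. The folder layout and repository path are hypothetical.

```shell
# Hypothetical layout: /srv/docker/<project>/docker-compose.yml plus that project's data.
STACKS_DIR="${STACKS_DIR:-/srv/docker}"
BORG_REPO="${BORG_REPO:-/mnt/backup/borg}"

cold_backup_all() {
    local dir name
    for dir in "$STACKS_DIR"/*/; do
        dir="${dir%/}"
        [ -f "$dir/docker-compose.yml" ] || continue
        name="$(basename "$dir")"

        # Stop only this project, so everything else stays online.
        docker compose -f "$dir/docker-compose.yml" stop

        # Back up the now-quiet project folder; report failures but keep going.
        borg create "$BORG_REPO::$name-cold-{now}" "$dir" \
            || echo "backup of $name failed" >&2

        # Always bring the project back up, even if the backup failed.
        docker compose -f "$dir/docker-compose.yml" start
    done
}
```

Because projects are handled one at a time, each service is only down for the duration of its own backup rather than the whole run.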

Not a bad idea for a hybrid thing, especially since people seem to say that a backup of a running database, taken with no special shutdown/export effort, is readable most of the time. And the dedupe stats are really impressive

I mostly use postgres so I created myself a small docker image, which has the postgres client, restic and cron. It also gets a small bash script which executes pg_dump and then restic to backup the dump. pg_dump can be used while the database is used so no issues there. Restic stores the backup in a volume which points to an NFS share on my NAS. This script is called periodically by cron.

I use this image to start a backup-service alongside every database. So it’s part of the docker-compose.yml

Would you mind pastebin-ing your docker image creator file? I have no experience cooking up my own docker image.

I quickly threw together a repository. But please keep in mind that I made some changes to it, to be able to publish it, and it is a combination of 3 different custom solutions that I made for myself. I have not tested it, so use at your own risk :D But if something is broken, just tell me and I’ll try to fix it.

Thanks for taking the time to upload the whole thing!! This is pretty cool because it moves the backup work straight into the container with the db

Sure! I’ll try to do it today but I can’t promise to get to it

I just do the first option.

Everything is pretty much idle at 3am when the backups run, since it’s just me using the services. So I don’t really expect to have issues with DBs being backed up this way.

This, just pg_dump properly and test the restore against a different container. Bonus points for spinning up a new app instance and checking if it gets along with the restored db.
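A rough sketch of such a restore test for a `pg_dumpall` file, using a throwaway container. The image tag and container name are assumptions. The image's major version should match, or be newer than, the server that produced the dump.

```shell
# Hypothetical default; pick the tag that matches your real database.
PG_IMAGE="${PG_IMAGE:-postgres:16}"

restore_test() {
    local dump="$1" name="restore-test-$$" tries=0 rc

    # Start an empty, throwaway Postgres container.
    docker run -d --name "$name" -e POSTGRES_PASSWORD=throwaway "$PG_IMAGE" >/dev/null || return 1

    # Wait for the real server. Checking over TCP skips the socket-only
    # bootstrap server the official image runs during first-time init.
    until docker exec "$name" pg_isready -h 127.0.0.1 -U postgres >/dev/null 2>&1; do
        tries=$((tries + 1))
        [ "$tries" -lt 60 ] || { docker rm -f "$name" >/dev/null; return 1; }
        sleep 1
    done

    # Replay the dump. A "role postgres already exists" error is expected here.
    docker exec -i "$name" psql -U postgres -q < "$dump" >/dev/null

    # Print the databases that now exist so they can be eyeballed or compared.
    docker exec "$name" psql -U postgres -Atc \
        "SELECT datname FROM pg_database WHERE NOT datistemplate ORDER BY datname"
    rc=$?

    # Clean up the throwaway container either way.
    docker rm -f "$name" >/dev/null
    return "$rc"
}
```

Calling `restore_test /srv/db-dumps/pg_dumpall.sql` (path is made up) prints the restored database names. Pointing a spare app instance at a container like this before it is removed would cover the "bonus points" part.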

I guess I’m a dummy, because I never even thought about this. Maybe I got lucky, but when I did restore from a backup, I didn’t have any issues. My containerized services came right back up like nothing was wrong. Though that may have been right before I successfully hosted my own (now defunct) Lemmy instance. I can’t remember, but I think I only had sqlite databases in my services at the time.

Good to know if I need to just throw the running database into borg/restic there’s a chance it’ll come out ok! Def not a dummy, I only found out databases may not like being backed up while running through someone mentioning it offhandedly

I have also been wanting to try borg for at least offsite backups. Currently been using a “long ass backup script” with how little time I currently have.

I’ve replaced my “long ass script” I was using for rsync with a much shorter one that uses borg. 10/10 would recommend.

Not sure how much time it will save because in both cases the stuff that took the most time was figuring out each tool’s voodoo for including/excluding directories from backup.

I’m coming from rsync too, hoping for the same good stuff

I just started using some docker containers I found on Docker Hub designed for DB backups (e.g. prodrigestivill/postgres-backup-local) to automatically dump from the databases into a set folder, which is included in the restic backup. I know you could come up with scripts but this way, I could easily copy the compose code to other containers with different databases (and different passwords etc).

That is nicely expandable with my docker_compose files, thanks for the find!

Snapshot with zfs, backup snapshot.

That’s ok for a database that’s running?

Do you use a ZFS backup manager?

While there’s probably a better way of doing it via the docker zfs driver, I just make a datastore per stack under the hypervisor, mount the datastore into the docker LXC, and make everything bind mount within that mountpoint, then snapshot and backup via Sanoid to a couple of remote ZFS pools, one local and one on zfs.rent.

I’ve had to restore our mailserver (mysql) and nextcloud (postgres) and they both act as if the power went out, recovering via their own journaling systems. I’ve not found any inconsistencies on recovery, even when I’ve done a test restore on a snapshot that’s been backed up during known hard activity. I trust both databases for their recovery methods, others maybe not so much. But test that for yourself.
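For people without a ZFS-to-ZFS target, the same "snapshot, then back up the snapshot" idea can be sketched with restic reading from the snapshot directory. This is not the Sanoid setup described above. The dataset name is hypothetical, and restic is assumed to be configured through its usual `RESTIC_REPOSITORY` and password environment variables.

```shell
# Hypothetical dataset holding the docker bind mounts.
DATASET="${DATASET:-tank/docker}"

snapshot_backup() {
    local snap mountpoint rc
    snap="backup-$(date +%Y%m%d-%H%M%S)"

    # Freeze a point-in-time view of the dataset while the databases keep running.
    zfs snapshot "$DATASET@$snap" || return 1

    # Snapshots are readable under <mountpoint>/.zfs/snapshot/<name>.
    mountpoint="$(zfs get -H -o value mountpoint "$DATASET")" \
        || { zfs destroy "$DATASET@$snap"; return 1; }

    # Back up the frozen view instead of the live files.
    restic backup "$mountpoint/.zfs/snapshot/$snap"
    rc=$?

    # Drop the temporary snapshot whether or not the backup succeeded.
    zfs destroy "$DATASET@$snap"
    return "$rc"
}
```

A restore from this kind of backup is what makes the database go through its crash recovery on first start, as described above.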

That is straightforward, and if you recovered nextcloud like that it does say something about the robustness!

With restic you can pipe to stdin, so I use mysqldump and pipe it to restic:

    mysqldump --defaults-file=/root/backup_scripts/.my.cnf --databases db-name | restic backup --stdin --stdin-filename db-name.sql

The .my.cnf looks like this:

    [mysqldump]
    user=db-user
    password="databasepassword"

Thanks for the incantation! It’s looking like something like this is gonna be the way to do it
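The same stdin trick should carry over to Postgres. A sketch, assuming the database runs in a container named `postgres` with superuser `postgres` (both placeholders) and that restic already knows its repository and password from the environment:

```shell
# Hypothetical container name.
DB_CONTAINER="${DB_CONTAINER:-postgres}"

pg_dump_to_restic() {
    # Stream the dump straight into restic; nothing is written to local disk.
    # Note: the exit status is restic's, so a dump that dies halfway can still
    # be stored as a truncated file unless you check for that separately
    # (for example with `set -o pipefail` in bash).
    docker exec "$DB_CONTAINER" pg_dumpall -U postgres \
        | restic backup --stdin --stdin-filename pg_dumpall.sql
}
```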

I guess the trouble is that you don’t want to read the volumes where the db files are because they’re not guaranteed to be consistent at a given point in time right?

Does the given engine support a backup method/utility that can be used to copy files to some volume on a set schedule?

As far as I know (unless smarter people know), you need a “long ass backup script” to make your own fun on a set schedule. Autorestic and borgmatic are smooth but don’t seem to have the granularity to deal with it. (Unless smarter people know how to make them do, which I may be fishing for lol)

I use rsnapshot docker image from Linuxserver. The tool uses rsync incrementally and does rotation/pruning for you (e.g. keep 10 days, 5 weeks, 8 months, 100 years). I just pointed it to the PostgreSQL data volume. This runs without interruption of service. To restore, I need to convert from WAL files into a dump… So, load an empty PostgreSQL container on any snapshot and run the dump command.

That’s wild and cool - don’t have that architecture now but… next time

Borgmatic is an automation tool for Borg. It has hooks for database backups.

https://torsion.org/borgmatic/docs/how-to/backup-your-databases/
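As a rough idea of what that looks like, the function below writes a minimal borgmatic config with a Postgres hook. The paths, credentials, and file name are all placeholders. The option layout follows recent borgmatic releases as I understand them, and older releases nest these options under sections such as `location:` and `hooks:`, so check the linked how-to against your version.

```shell
# Writes a minimal borgmatic config to the given path (default: ./borgmatic.yaml).
# Every path and credential in it is a placeholder.
write_borgmatic_config() {
    cat > "${1:-./borgmatic.yaml}" <<'EOF'
source_directories:
    - /srv/docker

repositories:
    - path: /mnt/backup/borg

# borgmatic dumps these databases itself before each backup run.
postgresql_databases:
    - name: all
      hostname: 127.0.0.1
      port: 5432
      username: postgres
      password: change-me
EOF
}
```

With a hook like this, borgmatic runs the dump itself as part of each backup, which is the part a long-ass script would otherwise handle. It assumes the Postgres port is reachable from wherever borgmatic runs and that the Postgres client tools are installed there.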

Dunno how I missed that in borgmatic, and I see autorestic also has “hooks” but with no database-specific examples. So I can build out what would be in a long ass script just in a long ass borgmatic/autorestic yml!

Glad I could help. 🙂